8.8. Introduction to Deep Learning

8.8.1. Classification with a CNN on FashionMNIST

import torch

import torch.nn as nn

import torch.nn.functional as F

from torch.utils.data import DataLoader, TensorDataset

from torchvision import datasets, transforms

import random

import matplotlib.pyplot as plt

The goal is to train an image classifier on the FashionMNIST dataset, which consists of \(28 \times 28\) grayscale images belonging to 10 classes of clothing items. Each example is a pair \((x_i, y_i)\), where \(x_i \in [0,1]^{28 \times 28}\) is the normalized image and \(y_i \in \{0,\dots,9\}\) is the corresponding label (for example: T-shirt, trousers, sneaker, etc.).

The objective is to learn a function \(f_\theta(x)\) whose output defines a probability distribution over the classes, typically through a final softmax layer: \(p(y \mid x) = \text{softmax}(f_\theta(x))\). The model is trained by minimizing the cross-entropy loss between the predictions and the true labels, \( \mathcal{L} = -\sum_i \log p(y_i \mid x_i)\), using minibatches so that the parameters can be optimized efficiently with gradient descent.
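As a small illustration of the two formulas above, the following sketch computes a softmax and the resulting cross-entropy in plain Python. The logits and labels are made-up values, and the helper names `softmax` and `cross_entropy` are introduced here only for illustration. The loss is averaged over the batch rather than summed, which matches the default reduction of `nn.CrossEntropyLoss` and differs from the sum above only by a constant factor.

```python
import math

def softmax(logits):
    """Convert a list of real-valued logits into probabilities."""
    m = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(batch_logits, labels):
    """Mean negative log-probability assigned to the true labels."""
    losses = []
    for logits, y in zip(batch_logits, labels):
        probs = softmax(logits)
        losses.append(-math.log(probs[y]))
    return sum(losses) / len(losses)

# Hypothetical logits for a batch of two examples and three classes.
batch_logits = [[2.0, 0.5, -1.0], [0.0, 0.0, 0.0]]
labels = [0, 2]

loss = cross_entropy(batch_logits, labels)
print(f"mean cross-entropy: {loss:.4f}")
```

When all logits are equal, the predicted distribution is uniform and the loss for that example is \(\log K\) for \(K\) classes, which is a useful sanity check for an untrained classifier.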

device = "cuda" if torch.cuda.is_available() else "cpu"

print(device)

torch.manual_seed(0)

batch_size = 128

lr = 1e-3

epochs = 10

# Data
transform = transforms.Compose([
    transforms.ToTensor(),  # values in [0,1], shape: (1,28,28)
])

train_ds = datasets.FashionMNIST(root="./data", train=True, download=True, transform=transform)

test_ds = datasets.FashionMNIST(root="./data", train=False, download=True, transform=transform)

train_loader = DataLoader(train_ds, batch_size=batch_size, shuffle=True, num_workers=2, pin_memory=True)

test_loader = DataLoader(test_ds, batch_size=batch_size, shuffle=False, num_workers=2, pin_memory=True)

classes = ["T-shirt/top", "Trouser", "Pullover", "Dress", "Coat", "Sandal", "Shirt", "Sneaker", "Bag", "Ankle boot"]

cpu


CNN model

We propose a convolutional neural network (CNN) to classify FashionMNIST images, taking advantage of their spatial structure. The model takes tensors \(x \in \mathbb{R}^{1 \times 28 \times 28}\) as input and applies two convolutional layers: the first maps the input to 16 feature maps while preserving the spatial resolution, and the second produces 32 feature maps. Each convolution is followed by a nonlinear activation \(\text{ReLU}(z) = \max(0, z)\) and a max-pooling operation that halves the spatial dimensions (from \(28 \times 28\) to \(14 \times 14\), and then to \(7 \times 7\)). The feature maps are then flattened into a vector and passed through fully connected layers, \(32 \cdot 7 \cdot 7 \rightarrow 128 \rightarrow 10\), whose final output is a vector of logits \(f_\theta(x) \in \mathbb{R}^{10}\). These logits are converted to probabilities only implicitly, because the cross-entropy loss used here (nn.CrossEntropyLoss) applies a log-softmax internally: \(p(y \mid x) = \text{softmax}(f_\theta(x))\). The performance metric is accuracy, the fraction of correct predictions: \(\text{acc} = \frac{1}{N} \sum_i \mathbf{1}(\hat{y}_i = y_i)\).
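The spatial sizes quoted above can be checked with the standard output-size formula for convolutions and pooling. The sketch below uses only plain Python; the helper names `conv2d_out` and `pool2d_out` are introduced here for illustration, while the kernel size 3, padding 1, and pooling factor 2 are the values used in this section's model.

```python
def conv2d_out(size, kernel_size, padding=0, stride=1):
    """Output size of a convolution along one spatial dimension."""
    return (size + 2 * padding - kernel_size) // stride + 1

def pool2d_out(size, kernel_size):
    """Output size of non-overlapping pooling (stride equal to kernel size)."""
    return size // kernel_size

size = 28
size = conv2d_out(size, kernel_size=3, padding=1)  # convolution keeps 28
size = pool2d_out(size, 2)                         # pooling halves to 14
size = conv2d_out(size, kernel_size=3, padding=1)  # convolution keeps 14
size = pool2d_out(size, 2)                         # pooling halves to 7

flat_features = 32 * size * size  # input width of the first linear layer
print(size, flat_features)
```

This is why the first fully connected layer expects \(32 \cdot 7 \cdot 7 = 1568\) input features.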

# Convolutional neural network model
class SmallCNN(nn.Module):
    def __init__(self):
        super().__init__()
        self.conv1 = nn.Conv2d(1, 16, kernel_size=3, padding=1)   # 28x28 -> 28x28
        self.conv2 = nn.Conv2d(16, 32, kernel_size=3, padding=1)  # 14x14 -> 14x14
        self.fc1 = nn.Linear(32 * 7 * 7, 128)
        self.fc2 = nn.Linear(128, 10)  # 10 classes

    def forward(self, x):
        x = F.relu(self.conv1(x))
        x = F.max_pool2d(x, 2)      # 28x28 -> 14x14
        x = F.relu(self.conv2(x))
        x = F.max_pool2d(x, 2)      # 14x14 -> 7x7
        x = x.view(x.size(0), -1)   # flatten
        x = F.relu(self.fc1(x))
        return self.fc2(x)          # logits

model = SmallCNN().to(device)

# Loss and optimizer
criterion = nn.CrossEntropyLoss()
optimizer = torch.optim.Adam(model.parameters(), lr=lr)

# Evaluation helper used during training
def accuracy(loader):
    model.eval()
    correct = 0
    total = 0
    with torch.no_grad():
        for x, y in loader:
            x, y = x.to(device), y.to(device)
            logits = model(x)
            pred = logits.argmax(dim=1)
            correct += (pred == y).sum().item()
            total += y.numel()
    model.train()
    return correct / total

Training loop

for epoch in range(1, epochs + 1):
    running_loss = 0.0
    for x, y in train_loader:
        x, y = x.to(device), y.to(device)
        optimizer.zero_grad()
        logits = model(x)
        loss = criterion(logits, y)
        loss.backward()
        optimizer.step()
        running_loss += loss.item() * x.size(0)
    train_loss = running_loss / len(train_ds)
    test_acc = accuracy(test_loader)
    print(f"Epoch {epoch}/{epochs} | loss: {train_loss:.4f} | test acc: {test_acc:.4f}")

Epoch 1/10 | loss: 0.5964 | test acc: 0.8314

Epoch 2/10 | loss: 0.3507 | test acc: 0.8753

Epoch 3/10 | loss: 0.3044 | test acc: 0.8874

Epoch 4/10 | loss: 0.2736 | test acc: 0.8887

Epoch 5/10 | loss: 0.2514 | test acc: 0.8982

Epoch 6/10 | loss: 0.2353 | test acc: 0.8955

Epoch 7/10 | loss: 0.2199 | test acc: 0.9065

Epoch 8/10 | loss: 0.2099 | test acc: 0.9095

Epoch 9/10 | loss: 0.1956 | test acc: 0.9123

Epoch 10/10 | loss: 0.1840 | test acc: 0.9119

Visualizing the results

model.eval().cpu()

# Pick 10 random images from the test set
indices = random.sample(range(len(test_ds)), 10)

plt.figure(figsize=(12, 5))
for i, idx in enumerate(indices):
    x, y_true = test_ds[idx]
    with torch.no_grad():
        logits = model(x.unsqueeze(0))
    y_pred = logits.argmax(dim=1).item()
    plt.subplot(2, 5, i + 1)
    plt.imshow(x.squeeze(), cmap="gray")
    plt.title(f"Pred:{classes[y_pred]}")
    plt.axis("off")
plt.suptitle("Clothing image classification (Fashion-MNIST)")
plt.tight_layout()
plt.show()

8.8.2. Regression: Damped Oscillator

import math

import numpy as np

import torch

import torch.nn as nn

import matplotlib.pyplot as plt

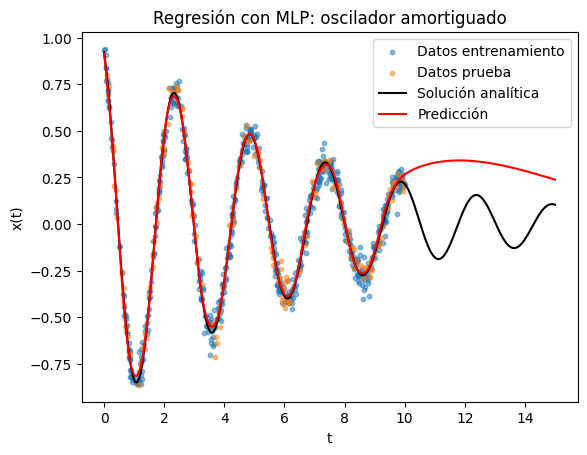

A damped harmonic oscillator is governed by the differential equation

\[ m \ddot{x} + c \dot{x} + k x = 0, \]

which can be rewritten as \(\ddot{x} + 2\gamma \dot{x} + \omega_0^2 x = 0\), where \(\gamma = \frac{c}{2m}\) is the damping coefficient and \(\omega_0 = \sqrt{\frac{k}{m}}\) is the natural frequency.

In the underdamped regime (\( \gamma < \omega_0\)), the solution is \( x(t) = A e^{-\gamma t} \cos(\omega t + \phi)\), with \( \omega = \sqrt{\omega_0^2 - \gamma^2}\).

To build the dataset, samples \((t_i, y_i)\) are generated by evaluating this solution at random times \(t_i \in [0,10]\) and then adding Gaussian noise: \(y_i = x(t_i) + \varepsilon_i\), with \( \varepsilon_i \sim \mathcal{N}(0, \sigma^2)\), which simulates imperfect experimental measurements.
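The relations between \((m, c, k)\) and \((\gamma, \omega_0, \omega)\) can be checked numerically. The sketch below is plain Python; the physical constants \(m = 1\), \(c = 0.3\), \(k = 6.2725\) are hypothetical values chosen here so that they reproduce \(\gamma = 0.15\) and \(\omega = 2.5\), and the helper name `underdamped_solution` is introduced only for illustration. It also evaluates the residual of the differential equation with finite differences, which should be close to zero.

```python
import math

def underdamped_solution(m, c, k, A=1.0, phi=0.0):
    """Return (gamma, omega0, omega, x) for an underdamped oscillator."""
    gamma = c / (2.0 * m)
    omega0 = math.sqrt(k / m)
    if gamma >= omega0:
        raise ValueError("not underdamped: requires gamma < omega0")
    omega = math.sqrt(omega0**2 - gamma**2)

    def x(t):
        return A * math.exp(-gamma * t) * math.cos(omega * t + phi)

    return gamma, omega0, omega, x

# Hypothetical constants chosen to give gamma = 0.15 and omega = 2.5.
m, c, k = 1.0, 0.3, 6.2725
gamma, omega0, omega, x = underdamped_solution(m, c, k, A=1.0, phi=0.4)

# Finite-difference check of x'' + 2*gamma*x' + omega0^2 * x = 0 at t0.
h, t0 = 1e-4, 1.0
dx = (x(t0 + h) - x(t0 - h)) / (2 * h)
ddx = (x(t0 + h) - 2 * x(t0) + x(t0 - h)) / h**2
residual = ddx + 2 * gamma * dx + omega0**2 * x(t0)

print(f"gamma={gamma:.3f}, omega={omega:.3f}, ODE residual={residual:.2e}")
```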

# Synthetic oscillator dataset
rng = np.random.default_rng(0)

A = 1.0        # amplitude
gamma = 0.15   # damping (γ)
omega = 2.5    # angular frequency (ω)
phi = 0.4      # phase (φ)

def x_true(t):
    return A * np.exp(-gamma * t) * np.cos(omega * t + phi)

N = 800
t = rng.uniform(0.0, 10.0, size=(N, 1)).astype(np.float32)
y = x_true(t).astype(np.float32)

noise_std = 0.05
y_noisy = y + noise_std * rng.normal(size=y.shape).astype(np.float32)

# Train/test split
idx = rng.permutation(N)
n_train = int(0.8 * N)
train_idx, test_idx = idx[:n_train], idx[n_train:]

t_train, y_train = t[train_idx], y_noisy[train_idx]
t_test, y_test = t[test_idx], y_noisy[test_idx]

# Standardizing the input (t) helps training; statistics come from the training set only
t_mean = t_train.mean(axis=0, keepdims=True)
t_std = t_train.std(axis=0, keepdims=True) + 1e-8

t_train_s = (t_train - t_mean) / t_std
t_test_s = (t_test - t_mean) / t_std

# Tensors
t_train_t = torch.from_numpy(t_train_s)
y_train_t = torch.from_numpy(y_train)
t_test_t = torch.from_numpy(t_test_s)
y_test_t = torch.from_numpy(y_test)

MLP regression model

We want to fit a multilayer perceptron (MLP) that approximates the relationship between time \(t\) and the oscillator position \(x(t)\) from noisy data. The model takes a scalar \(t\) (previously standardized) as input and outputs a prediction \(\hat{x}(t)\). The architecture is a fully connected network with three linear layers and nonlinear \(\tanh\) activations, which lets it capture both the oscillation and the exponential decay of the signal. Training minimizes the mean squared error (MSE) between the model predictions and the observations \(y_i\).
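When reading the error values reported during training, it helps to know what the best achievable value is. Because the observations carry Gaussian noise with standard deviation \(\sigma\), even a model that recovered \(x(t)\) exactly would have an RMSE close to \(\sigma\) against the noisy targets. The plain-Python sketch below illustrates this with the oscillator parameters from this section; the sample size of 5000, the seed, and the helper name `mse` are assumptions made only for this example.

```python
import math
import random

def mse(preds, targets):
    """Mean squared error between two equally long sequences."""
    return sum((p - y) ** 2 for p, y in zip(preds, targets)) / len(targets)

def x_true(t, A=1.0, gamma=0.15, omega=2.5, phi=0.4):
    """Noise-free underdamped oscillator solution."""
    return A * math.exp(-gamma * t) * math.cos(omega * t + phi)

random.seed(0)
noise_std = 0.05

# Noisy observations at random times in [0, 10].
ts = [random.uniform(0.0, 10.0) for _ in range(5000)]
ys = [x_true(t) + random.gauss(0.0, noise_std) for t in ts]

# A "perfect" model predicts the noise-free signal.
perfect_preds = [x_true(t) for t in ts]
rmse_floor = math.sqrt(mse(perfect_preds, ys))

print(f"RMSE of the noise-free signal against noisy data: {rmse_floor:.4f}")
```

A test RMSE that approaches `noise_std` therefore indicates that the remaining error is dominated by measurement noise rather than by the model.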

class MLP(nn.Module):
    def __init__(self):
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(1, 64),
            nn.Tanh(),
            nn.Linear(64, 64),
            nn.Tanh(),
            nn.Linear(64, 1),
        )

    def forward(self, x):
        return self.net(x)

torch.manual_seed(0)
model = MLP()
criterion = nn.MSELoss()
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)

Training loop

batch_size = 128
epochs = 1500

for epoch in range(1, epochs + 1):
    # simple mini-batching: reshuffle the training indices every epoch
    perm = torch.randperm(t_train_t.size(0))
    for i in range(0, t_train_t.size(0), batch_size):
        idxb = perm[i:i+batch_size]
        xb = t_train_t[idxb]
        yb = y_train_t[idxb]

        optimizer.zero_grad()
        pred = model(xb)
        loss = criterion(pred, yb)
        loss.backward()
        optimizer.step()

    if epoch in {1, 200, 500, 1000, 1500}:
        with torch.no_grad():
            test_pred = model(t_test_t)
            rmse = torch.sqrt(torch.mean((test_pred - y_test_t) ** 2)).item()
        print(f"Epoch {epoch:4d} | Test RMSE: {rmse:.4f}")

Epoch 1 | Test RMSE: 0.3902

Epoch 200 | Test RMSE: 0.3766

Epoch 500 | Test RMSE: 0.3245

Epoch 1000 | Test RMSE: 0.0645

Epoch 1500 | Test RMSE: 0.0517

Visualizing the results

# Time-ordered grids so the curves plot smoothly
t_grid = np.linspace(0.0, 10.0, 600, dtype=np.float32).reshape(-1, 1)
# t_extra extends beyond the training range [0, 10] to show extrapolation
t_extra = np.linspace(0.0, 15.0, 700, dtype=np.float32).reshape(-1, 1)
t_extra_s = (t_extra - t_mean) / t_std

with torch.no_grad():
    y_grid_pred = model(torch.from_numpy(t_extra_s)).numpy()

plt.figure()
plt.scatter(t_train, y_train, s=10, alpha=0.5, label="Training data")
plt.scatter(t_test, y_test, s=10, alpha=0.5, label="Test data")
plt.plot(t_extra, x_true(t_extra), '-', c="black", label="Analytical solution")
plt.plot(t_extra, y_grid_pred, 'r-', label="Prediction")
plt.xlabel("t")
plt.ylabel("x(t)")
plt.title("MLP regression: damped oscillator")
plt.legend()
plt.show()

8.8.3. Recurrent Neural Networks

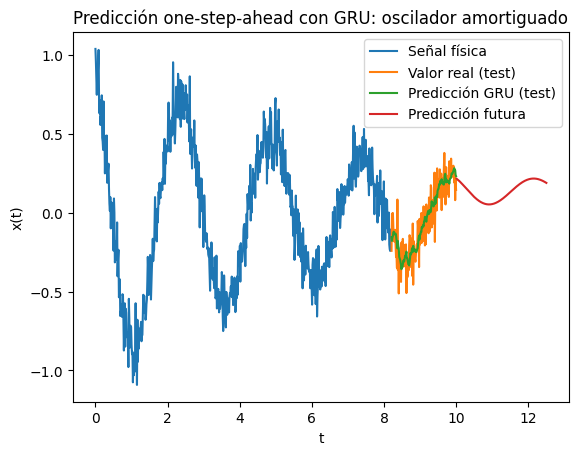

Now, instead of tackling this problem with an MLP (multilayer perceptron), we will use a Gated Recurrent Unit (GRU), a recurrent architecture designed to handle sequences and temporal dependencies, which makes it a natural choice for time series such as this one.
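For reference, the update that a single GRU layer applies at each time step can be written as follows. This follows the convention used in the PyTorch nn.GRU documentation; the weight and bias symbols (\(W\), \(b\)) and the gate names \(r_t\), \(z_t\), \(n_t\) are notation introduced here and do not appear elsewhere in this section. Here \(x_t\) is the input at step \(t\), \(h_t\) the hidden state, \(\sigma\) the logistic sigmoid, and \(\odot\) the elementwise product.

```latex
\begin{aligned}
r_t &= \sigma\left(W_{ir} x_t + b_{ir} + W_{hr} h_{t-1} + b_{hr}\right) \\
z_t &= \sigma\left(W_{iz} x_t + b_{iz} + W_{hz} h_{t-1} + b_{hz}\right) \\
n_t &= \tanh\left(W_{in} x_t + b_{in} + r_t \odot \left(W_{hn} h_{t-1} + b_{hn}\right)\right) \\
h_t &= (1 - z_t) \odot n_t + z_t \odot h_{t-1}
\end{aligned}
```

The update gate \(z_t\) controls how much of the previous state is carried over, and the reset gate \(r_t\) controls how much of it enters the candidate state \(n_t\).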

We will build a dataset of temporal windows using a fixed context length \(L\), so that each input sequence contains the last \(L\) points and the target is the next value.
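The windowing scheme is easiest to see on a tiny series. The sketch below is a list-based version written only for illustration (the name `make_windows_list` and the toy series are assumptions, not part of the section's code).

```python
def make_windows_list(series, L):
    """Split a sequence into (window of L values, next value) pairs."""
    X, Y = [], []
    for i in range(len(series) - L):
        X.append(series[i:i + L])  # the L most recent values
        Y.append(series[i + L])    # the value that follows them
    return X, Y

series = [0, 1, 2, 3, 4, 5]
X, Y = make_windows_list(series, L=3)
for x, y in zip(X, Y):
    print(x, "->", y)
# [0, 1, 2] -> 3
# [1, 2, 3] -> 4
# [2, 3, 4] -> 5
```

A series of length \(N\) yields \(N - L\) windows, so 800 points with \(L = 80\) produce 720 training pairs.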

# We use a window of length L to predict the next point.
L = 80  # context length
N = 800

def make_windows(series, L):
    X, Y = [], []
    series = series.squeeze()
    for i in range(len(series) - L):
        X.append(series[i:i+L])
        Y.append(series[i+L])
    return torch.vstack(X).unsqueeze(-1), torch.vstack(Y)

t_ordered = torch.linspace(0,10,N)
y_ordered = x_true(t_ordered)

noise_std = 0.1
y_noisy_o = y_ordered + noise_std * torch.randn(size=y_ordered.shape)

# Normalize the noisy signal to zero mean and unit variance
y_noisy_mean, y_noisy_std = y_noisy_o.mean(), y_noisy_o.std()
y_noisy_n = (y_noisy_o - y_noisy_mean)/y_noisy_std

X_all, y_all = make_windows(y_noisy_n, L)  # X_all: (N - L, L, 1), y_all: (N - L, 1)
print(X_all.shape, y_all.shape)

torch.Size([720, 80, 1]) torch.Size([720, 1])

We now define and train a GRU to solve this sequential regression problem. Here the test error is reported as the RMSE on the normalized signal.

# Train/test split that preserves temporal order

N = X_all.shape[0]

n_train = int(0.8 * N)

X_train, y_train = X_all[:n_train], y_all[:n_train]

X_test, y_test = X_all[n_train:], y_all[n_train:]

train_dataset = TensorDataset(X_train, y_train)

batch_size = 32

train_dataloader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)

# Recurrent model (GRU)
class GRURegressor(nn.Module):
    def __init__(self, hidden_size=32, num_layers=1):
        super().__init__()
        self.rnn = nn.GRU(
            input_size=1,
            hidden_size=hidden_size,
            num_layers=num_layers,
            batch_first=True
        )
        self.head = nn.Linear(hidden_size, 1)

    def forward(self, x):
        # x: (B, L, 1)
        out, _ = self.rnn(x)        # out: (B, L, H)
        h_last = out[:, -1, :]      # last hidden state: (B, H)
        y_hat = self.head(h_last)   # (B, 1)
        return y_hat

torch.manual_seed(0)
model = GRURegressor(hidden_size=64)
criterion = nn.MSELoss()
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)

# Training
epochs = 30

for epoch in range(epochs):
    model.train()
    for xb, yb in train_dataloader:
        optimizer.zero_grad()
        pred = model(xb)
        loss = criterion(pred, yb)
        loss.backward()
        optimizer.step()

    # Report the test error every 5 epochs
    if epoch % 5 == 0:
        model.eval()
        with torch.no_grad():
            pred_test = model(X_test)
            rmse = torch.sqrt(torch.mean((pred_test - y_test) ** 2)).item()
        print(f"Epoch {epoch:2d} | Test RMSE (norm): {rmse:.4f}")

Epoch 0 | Test RMSE (norm): 0.3952

Epoch 5 | Test RMSE (norm): 0.2705

Epoch 10 | Test RMSE (norm): 0.2707

Epoch 15 | Test RMSE (norm): 0.2705

Epoch 20 | Test RMSE (norm): 0.2716

Epoch 25 | Test RMSE (norm): 0.2681

We compare the signal with the model's predictions visually.

# Visualization: prediction vs. ground truth on the test segment
model.eval()
with torch.no_grad():
    yhat_test = model(X_test).squeeze(-1)  # normalized
y_test = y_test.squeeze(-1)

# Undo the normalization to plot in the original units
yhat_test_u = yhat_test * y_noisy_std + y_noisy_mean
y_test_u = y_test * y_noisy_std + y_noisy_mean
y_train_u = y_train * y_noisy_std + y_noisy_mean

# Time values of the test segment
t_test = t_ordered[L + n_train : L + n_train + len(y_test_u)]

# Autoregressive forecast beyond the data: start from the last L observed
# (normalized) values and feed each prediction back into the window
ventana = y_test[-L:].unsqueeze(0).unsqueeze(-1)
preds = []
dt = (t_ordered[1:] - t_ordered[0:-1])[-1].item()
t_preds = [t_ordered[-1].item()]

with torch.no_grad():
    for i in range(200):
        y_pred = model(ventana)
        preds.append(y_pred.item())
        ventana = torch.cat([ventana[:,1:,:], y_pred.unsqueeze(1)], dim=1)
        t_preds.append(t_preds[-1] + dt)

y_pred_n = torch.tensor(preds)
y_pred = (y_pred_n * y_noisy_std + y_noisy_mean)

plt.figure()
plt.plot(t_ordered[:len(y_train)+L], y_noisy_o[:len(y_train)+L], label="Physical signal")
plt.plot(t_test, y_test_u, label="True value (test)")
plt.plot(t_test, yhat_test_u, label="GRU prediction (test)")
plt.plot(t_preds[1:], y_pred.squeeze(), label="Future prediction")
plt.xlabel("t")
plt.ylabel("x(t)")
plt.title("One-step-ahead prediction with a GRU: damped oscillator")
plt.legend()
plt.show()
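The forecast beyond the observed data is autoregressive: each predicted value is appended to the window and the oldest value is dropped before predicting again. The plain-Python sketch below isolates that pattern. The function `rollout` and the stand-in predictor `linear_extrapolation` are hypothetical names introduced only for illustration; the predictor is a simple placeholder for a trained model, not the GRU itself.

```python
def rollout(predict_next, window, steps):
    """Autoregressive forecast: feed each prediction back as the newest input."""
    window = list(window)
    preds = []
    for _ in range(steps):
        y_next = predict_next(window)
        preds.append(y_next)
        window = window[1:] + [y_next]  # slide the window forward by one step
    return preds

def linear_extrapolation(window):
    """Hypothetical stand-in for a trained model: extend the last two points linearly."""
    return 2 * window[-1] - window[-2]

preds = rollout(linear_extrapolation, [0.0, 1.0, 2.0], steps=4)
print(preds)  # [3.0, 4.0, 5.0, 6.0]
```

Because every step consumes earlier predictions rather than measurements, errors can accumulate over the forecast horizon, so multi-step forecasts are generally less reliable than the one-step-ahead predictions on the test segment.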